This is the story of how two Silicon Valley techies started developing BearID, an open source deep learning application to identify individual brown bears. This is a summary of a longer story originally shared on the Hypraptive blog. Individual posts from the blog are referenced below.

In late 2016, Mary and I were taking some classes on deep learning and watching the Brooks Falls Live Cam (see “Guilty Pleasures”). We were inspired to combine these two interests by developing a deep learning application to automatically identify bears from photos or videos. The initial focus was on the brown bears (Ursus arctos) present in the Brooks River area of Katmai National Park (see “The Bears of Brooks River”).

The first thing we wanted to understand was how humans identify bears (see “Bear Necessities”). Next, we researched other animal identification projects (see “Deep Learning in the Wild”). Finally, we settled on an initial approach based on FaceNet, a human face recognition application from Google (see “FaceNet for Bears”).

The FaceNet algorithm has four steps:

1. Find the face

2. Reorient each face

3. Encode the face

4. Match the face

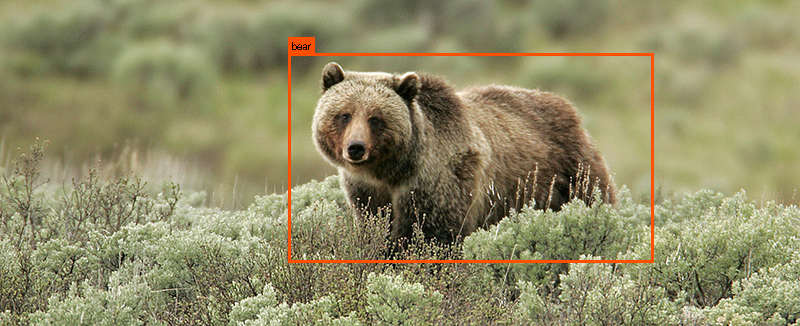

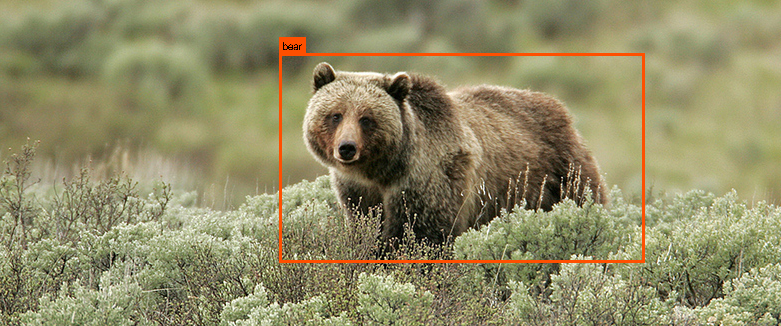

For step 1 we examined a few different tools, including YOLO (see “Find the Bears: YOLO”) and Dlib Toolkit (see “Find the Bears: dlib”). To find the bear’s face, we tried training our own detector using Histogram of Oriented Gradients (HOG) in the Dlib Toolkit (see “Bear Face Detector”). Eventually we came across a deep learning example in the Dlib Toolkit called the Dog Hipsterizer. It turned out to work pretty well for bears (see “Hipster Bears”)!

With a few modifications, we were able to use the Dog Hipsterizer example to find bear faces and landmarks (e.g., eyes and nose). We called this application bearface. Since we were starting to run deep learning neural networks, we ended up building a deep learning computer. After loading all the necessary software on our shiny new machine, affectionately called Otis, we tackled step 2. We used the output from bearface to reorient the face and crop it into a face chip. We called this application bearchip (see “Bear Chipsterizer”).
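To make the reorientation step a little more concrete, here is a minimal Python sketch of the general idea. It is not the bearchip code. It assumes the only landmarks used are the two eye centers, and the function names, the 150-pixel chip size, and the eye spacing fraction are all made up for illustration. The sketch works out how much to rotate and scale the image so the eyes end up level and a fixed distance apart, then maps image points into chip coordinates.

```python
import math


def alignment_transform(left_eye, right_eye, chip_size=150, eye_fraction=0.4):
    """Compute the rotation angle (degrees), scale, and center that level the eyes.

    chip_size and eye_fraction are illustrative assumptions: after alignment
    the eyes sit eye_fraction * chip_size pixels apart.
    """
    (lx, ly), (rx, ry) = left_eye, right_eye
    dx, dy = rx - lx, ry - ly
    angle = math.degrees(math.atan2(dy, dx))   # tilt of the line through the eyes
    eye_distance = math.hypot(dx, dy)
    scale = (eye_fraction * chip_size) / eye_distance
    center = ((lx + rx) / 2.0, (ly + ry) / 2.0)  # midpoint between the eyes
    return angle, scale, center


def to_chip_coords(point, angle, scale, center, chip_size=150):
    """Map an image point into chip coordinates.

    Rotates by -angle about the eye midpoint, scales, and moves the
    midpoint to the middle of the chip.
    """
    theta = math.radians(-angle)
    x, y = point[0] - center[0], point[1] - center[1]
    x_rot = x * math.cos(theta) - y * math.sin(theta)
    y_rot = x * math.sin(theta) + y * math.cos(theta)
    half = chip_size / 2.0
    return (x_rot * scale + half, y_rot * scale + half)


if __name__ == "__main__":
    # Hypothetical eye positions for a bear with a tilted head.
    left_eye, right_eye = (100, 100), (200, 200)
    angle, scale, center = alignment_transform(left_eye, right_eye)
    print(to_chip_coords(left_eye, angle, scale, center))
    print(to_chip_coords(right_eye, angle, scale, center))
```

With the transform in hand, an image library can apply the same rotation and scale to the pixels and crop the resulting square, so every face chip comes out at the same size and orientation.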

The final two steps of the algorithm were realized using the Dlib Toolkit deep metric learning face recognition example and Support Vector Machine (SVM) routines. We called these two applications bearembed and bearsvm. We ran through all the steps and trained the necessary networks. Finally, we put it all together to create the bearid application, which takes in a photo and outputs the ID of the bear in the photo (see “BearID 1.0”).
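For a feel of what the match step does, here is a toy Python sketch. It deliberately swaps the SVM for a much simpler nearest-centroid rule, so it should not be read as how bearsvm works. The bear labels, the 3-number embeddings, and the function names are all invented for illustration; real embeddings from a trained network have many more dimensions.

```python
import math
from collections import defaultdict


def bear_centroids(labeled_embeddings):
    """Average the embeddings for each label.

    labeled_embeddings is a list of (label, vector) pairs.
    Returns a dict mapping each label to its mean vector.
    """
    sums = {}
    counts = defaultdict(int)
    for label, vector in labeled_embeddings:
        if label not in sums:
            sums[label] = list(vector)
        else:
            sums[label] = [a + b for a, b in zip(sums[label], vector)]
        counts[label] += 1
    return {
        label: [value / counts[label] for value in total]
        for label, total in sums.items()
    }


def identify(embedding, centers):
    """Return the label whose centroid is closest in Euclidean distance."""
    return min(centers, key=lambda label: math.dist(embedding, centers[label]))


if __name__ == "__main__":
    # Made-up training embeddings for two hypothetical bears.
    training = [
        ("bear_a", [0.0, 0.0, 1.0]),
        ("bear_a", [0.0, 0.2, 0.8]),
        ("bear_b", [1.0, 0.0, 0.0]),
        ("bear_b", [0.8, 0.2, 0.0]),
    ]
    centers = bear_centroids(training)
    print(identify([0.1, 0.1, 0.9], centers))
```

The sketch relies on the same property the real pipeline does: a good embedding places photos of the same bear close together, so even a simple classifier on top of it can tell individuals apart.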

After completing BearID 1.0, we added the ability to pull face chips from videos (see “Bear Face Chips From Videos”). We also cataloged how many face images we had of each bear from Brooks Falls (see “The Many Faces of Brooks Falls”).

In August of 2017, Mary and I were introduced to Melanie by Steph O’Donnell, community manager at WILDLABS.NET. At the time, Melanie was already studying brown bears in the field and had started a similar project with the goal of automatically identifying bears from photos and camera traps. After a few discussions, we decided to join forces and form the BearID Project with the mission to develop noninvasive technologies to identify and monitor bears, facilitating their conservation.

Mary and I continued collecting data and retraining our network (see “Collecting and Labelling Data”). We also cataloged the first batch of data we received from Melanie’s field work in Glendale Cove, British Columbia (see “The Bears of Glendale Cove, BC”). As the bears are starting to emerge from their dens for the 2018 season, we still have a lot of work to do!

Follow along with us on our adventure or see how you can get involved with the BearID Project.
